📜 Description:

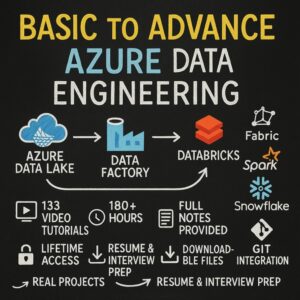

You’ll start with the core architecture and workspace setup of Azure Databricks, then move to big data processing using Apache Spark. The course covers ETL workflows, Delta Lake for data reliability, and connecting Databricks to leading data storage and analytics services such as Snowflake, Azure Data Lake Storage (ADLS), Azure Synapse Analytics, and Azure SQL Database.

Through hands-on projects, you’ll implement real-world data pipelines, automate workflows, and create end-to-end analytics solutions — preparing you for data engineering and big data analytics roles in Azure environments.

✅ What You Will Learn

📊 Foundations & Setup

- Azure Databricks Workspace Setup & Architecture
- Connecting Databricks with Azure Storage & Data Lake (ADLS)
- Apache Spark Basics & PySpark for Data Processing

⚡ Data Engineering with Databricks

- ETL (Extract, Transform, Load) Pipelines in Databricks
- Delta Lake for Data Consistency & Reliability
- Batch & Streaming Data Processing in Databricks

🔗 Database & Service Integrations

- Reading Data from & Writing Data to Snowflake
- Integrating with Azure Data Lake Storage (ADLS)
- Working with Azure Synapse Analytics
- Connecting to Azure SQL Database

🧪 Hands-On Projects

- Real-Time Data Pipeline Using Azure Databricks & Delta Lake
- Integration Project with Snowflake, ADLS, and Synapse
- End-to-End Data Engineering Project with Azure Databricks

👤 Who Is This Course For?

- Data engineers who want to specialize in Azure Databricks
- Developers & analysts transitioning into cloud-based big data roles
- Professionals who want to build scalable ETL pipelines
- Students & professionals preparing for Azure Databricks certifications
- Anyone interested in building advanced analytics solutions on Azure
